A few days ago a co-worker messaged me and asked about a company we were working with. Confused, I replied that I didn’t know them. He then said that he had pulled that information from a meeting summary made by AI. I had stopped reading those as they were full of fabrications; on review, the notes had credited me with work I had not done, declared a product release date that was pure fabrication, and made up a company that did not exist.

Earlier this year, I was at a friend’s house, and he was extolling the virtues of Arc Raiders and trying to get me to play it. I’d played The Finals, the previous game from the developer, Embark. Besides being shit at it, the thing that hurt me the most was the utterly atrocious voice “acting”. There was an uncanny valley to the characters’ voices that put me on edge whenever I heard them talk. The talking seemed human, but I was hearing aliens approximating speech and trying to integrate themselves, like the pod creatures in the film Invasion of the Body Snatchers.

I explained all of this and, as I did so, my friend looked me in the eye, gave me a smile and shrugged. “I know better than to talk to you about this when you have set your mind on it”. He was making a playful dig at what he perceived as my stubborn, reactionary nature.

These voices in The Finals were AI-generated, using a text-to-speech program that the developers were very proud of. When there was some push-back from the voice actors’ union at the time, they were quite adamant that “Making games without actors isn’t an end goal…”.

Arc Raiders used the same technology, and it seems like they have rolled back that original statement a little, and now the boss is like ‘actually using AI is good, and we are going to use it more’. (ed note – Arc Raiders dev Embark have, since this was written, started to replace AI voices with real people, at least to a degree)

These statements and backtracking are deeply troubling, because there are wider issues with Gen AI. Bear in mind that I am just a weird, little guy on a website called Xbox Tavern, but my concerns can be broken down into 3 parts:

1. The Environment

Le Monde reported that data centres consumed 20% of all metered electricity in Ireland. The Guardian has reported on a Dutch economist’s assessment of carbon emissions and water consumption, which estimated that the water consumption of data centres was 765 billion litres. The other estimate it made was that each data centre could generate as much in carbon emissions as 24,000 homes. There are other reports that this will be accelerated by the US administration removing restrictions on emissions.

With all of this troubling reporting it should have been heartening that Google reported in 2024 that it had managed to reduce its emissions from data centres by 12%.

Just before that report came out, though, Google pivoted to say that their climate goals were “now more complex and challenging across every level – from local to global”.

These movements seem craven, the acts of greedy corporations destroying the world at an alarming rate for the sake of profit. Except, it isn’t really clear where the money is coming from or going.

2. The Economy

I cannot properly articulate the Ponzi scheme that seems to be going on with AI. Instead I will rely on Ed Zitron to unpack that with a million different receipts.

What I do know is that, although history doesn’t repeat, it certainly rhymes. The last time we were in this kind of moment it led to a recession, and the recession AI is likely to cause will probably involve some more companies that are ‘too big to fail’. What that means for everyone else is that, if and when these companies fail, governments will be expected to step in (like they did with the banks) and it will be the people like you and me who suffer – not the companies burning this digital money like it is made of paper.

Because history loves to rhyme in couplets, I expect that these ‘too big to fail’ companies will take those bailouts and do what they always do: maintain their CEOs’ bonuses and proceed to strip people of their homes and money.

It makes it super fun to see the UK government dropping money into this endeavour, meaning that they are monetarily incentivised to make sure this continues.

Everyone is assuring us that profit is just around the corner. The more sanguine defenders will argue that Uber and Doordash still barely make a profit, so AI bleeding us dry of money and resources is fine, because eventually it will be profitable and good.

Which leads me to…

3. The Accuracy/Reliability

Every single person that has used any of the AI agents has seen them get things horribly, confidently wrong. AI has taken things like speech-to-text in meeting notes and, somehow, made them terrible.

There was a joke post about how ChatGPT and its ilk were 100% correct about things that the poster knew nothing about, but got lots wrong about anything that they had even a modicum of understanding about.

For all the tweaks and adjustments that have been made, ChatGPT, the flagship of AI, is still wrong 1 out of 3 times. If you listen to Microsoft, it tells you that it can range from 8-83% on healthcare, but it is 91.4% accurate on the Massive Multitask Language Understanding (MMLU) benchmark.

Sounds great, if you don’t immediately look at the Wikipedia entry for MMLU that lists its limitations as “Data contamination also posed a significant threat for this benchmark’s validity; companies could easily include questions and answers into their models’ training data, effectively rendering it ineffective.”

Cool.

It is true that no human is 100% correct, even if they cheat at the tests like the above link suggests. The difference is that, while humans may not be reliable, they are held accountable when they mess up. This is something that every company touting AI right now is trying to dodge.

Any person that was consistently wrong 30% of the time and gave potentially life-threatening advice would (in normal non-apocalypse times) get fired, or worse. But when a self-driving car kills someone, the company that built it with these errors immediately shifts the responsibility to the human in the vehicle. When a teenager is coaxed into committing suicide, the company that built that large language model doesn’t remove that program from the market and instead is likely to go to court to blame the victim.

The company admitted the following:

“Sam Altman, alleging that the version of ChatGPT at that time, known as 4o, was ‘rushed to market … despite clear safety issues’.”

(Please note that when a girl encouraged her boyfriend to kill himself, the texts were enough to put her in prison for up to 20 years.)

Not only can we not trust what AI is doing but, when we have clear evidence of malpractice, the conglomerates that are invested in it circle the wagons and try to do as little as possible to rectify the issues.

So, why do we believe anything that is being said? Why do we defend the small steps that companies take to remove a human from the equation, when there is no intent here other than to make money? The argument is that things will eventually get so much better that we’ll forget that we ever lived without it.

When you pull off the mask it is capitalism at it again

The problem is that AI is currently following the path of most major tech ‘advances’ and disruptions. As Cory Doctorow described it in his book, Enshittification:

- Tech tries to attract and lock in customers using investor money to undercut whatever it can

- It locks in businesses to sell products to their customers

- Tech then puts the screws to their customers, followed swiftly by putting the screws to the businesses

- Tech keeps making tweaks to the last step until as much of the money as possible is redirected back to itself.

You can see this practice in services like Uber, Netflix, and my favourite service, Game Pass. The difference I see between AI and those I’ve listed is that the initial release of these services provided something that people could tangibly want. Each service got worse, more expensive, and added ways to exploit customers.

AI services are still in the first stage, and they’re starting with a product that is not good, that doesn’t offer any tangible benefits to the end user. This isn’t going to get better, only more expensive and less satisfying.

Just two days ago as of writing, Amazon announced that they were going to partner with Flock, a service provider for ICE, to add their Ring cameras to an AI network. The recent Super Bowl advert says it is to find dogs, but even WeRateDogs knows that is nonsense.

To use gaming terms, we are witnessing a speedrun towards exploitation.

A metaphor I have been using is that AI is a hammer, but the companies selling it are trying to say that the hammer can do anything. Hammers are very good at nailing things down (and murder) but they kind of suck at everything else. When the AI bubble implodes – hopefully before it takes the planet with it – my hope is that people will let what remains of AI do what it does best: be a hammer and not much else.

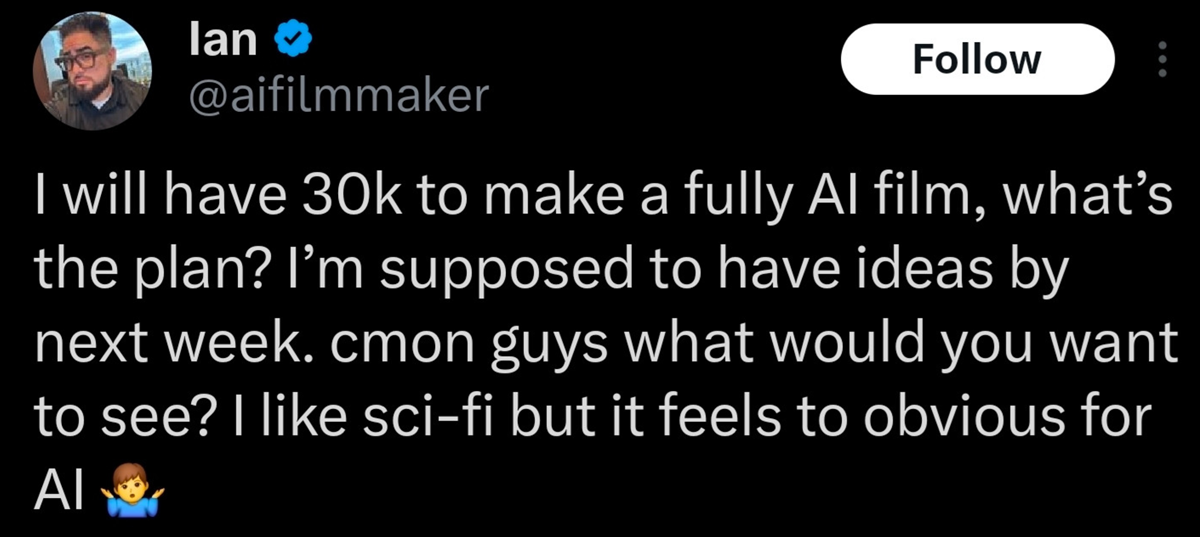

The reality, as well, is that the people who are excited about AI being able to do all these creative things they weren’t able to do don’t really understand what the joy of discovery and creation is.

The journey is the adventure

In a recent podcast on Xbox Tavern between Special Guest Pete and our esteemed editor (ed – hi), they alighted on the recent controversy of Expedition 33 using AI assets as placeholders in their game, and how the team may have used AI in early concepting.

I apologise to SGP, but his broad dismissal of this controversy as ‘getting the creative juices flowing’ makes me angry. I understand the reasoning to wash away this minor infringement but, like I already mentioned, everyone involved in this sort of thing has been lying (or backtracking) about this stuff at every turn when they feel like they can get away with it.

For me, the crux of it is that the people that engage in their craft find delight in all parts of it. The people trying to skip one of the steps of the creative process make me highly suspicious of their motivation to be part of it.

This article took me weeks of hemming and hawing about what it is I was trying to say. I do not think I got everything right, and I would never claim that (and I went back and forth with an editor on what to cut). There were also months of absorbing conversations, reading blog posts, getting a scent on what I think is going on (which I still might be wrong about). All of that was a process – creative ideation, if you will – filled with a few elements of boredom and quiet moments.

That is what concept art is. It is first drafts, it is bouncing ideas off people, and in some cases it is a lifetime of choices that then influence decisions. On top of that, concept artists have spoken up, talking about how they got hired after people searched for content that was similar to the ideas in their heads.

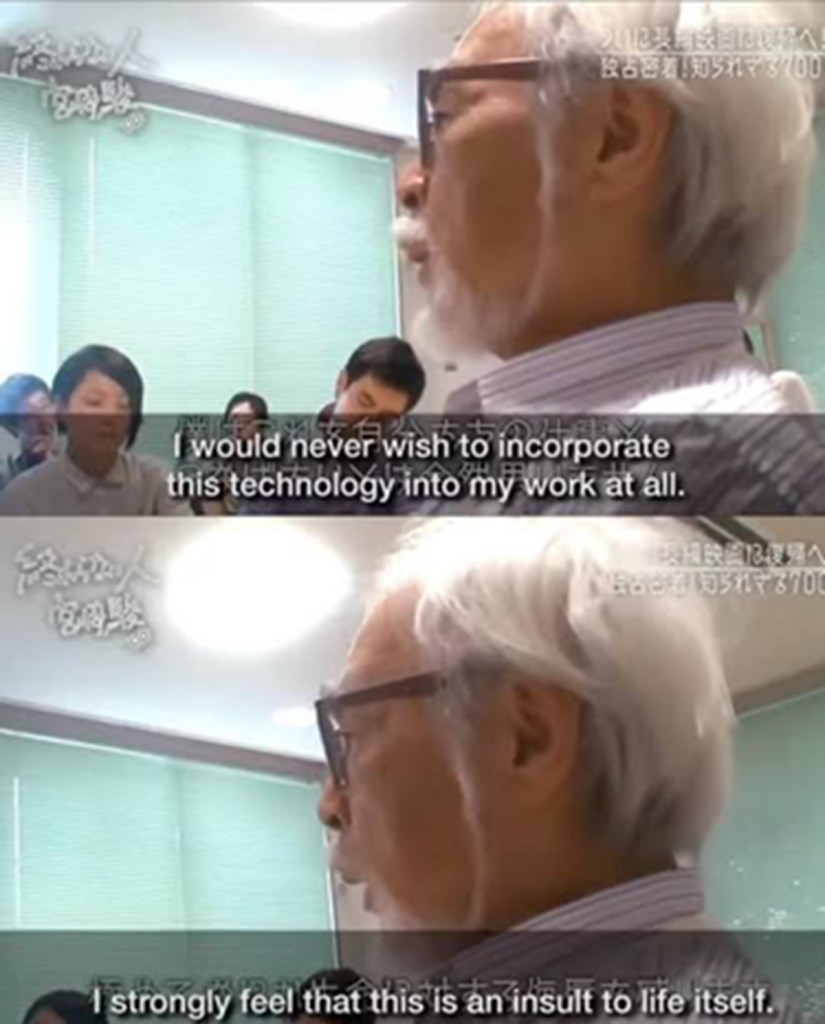

AI doesn’t bolster creativity, it stifles it. It will stop the newest people trying to learn, and it will fool people into thinking that they understand creative processes because they type in ‘anime girl with big titties’ and get a generic looking big-titted waifu. These people do not take joy in the process of making things, they see it as an avenue for making money.

If we let companies touting AI have their way, they’ll reframe everything that doesn’t support their current aggressive grab for your privacy, your hard work, and the theft of the world’s creative work as a modern day Luddite movement (I strongly recommend this article for what the actual Luddite movement was about).

If we sit back and act complacent as these companies gradually inch towards taking away as much from us as possible, the world will be a far worse place.

It is why I choose to make it clear that once I see something that is AI generated, I am instantly uninterested in the product. In its current iteration it is an existential threat to us from an environmental, financial and creative angle. Giving these companies even a modicum of the benefit of the doubt, when they have no problem lying to us over and over again, is only to invite your killer into the house.

They keep trying to tell us that, with Gen AI, the future is here, but if we reject it, it doesn’t have to be.
